Why Traditional Monitoring Falls Short

Legacy performance monitoring often relies on isolated metrics: total distance, peak speed, session RPE. While useful, these measures offer limited resolution at the individual level. They fail to capture the dynamic, non-linear nature of real-time movement quality.

No single metric can fully characterize an athlete's readiness state. Load tolerance is a multidimensional puzzle — and traditional statistical approaches weren't designed to map this complexity.

Machine Learning for Biomechanical Intelligence

Machine learning algorithms are designed for exactly this type of problem. Rather than analyzing individual variables in isolation, ML models identify patterns across hundreds of features simultaneously — including interactions that manual analysis would miss.

Recent research has applied various ML approaches to athlete monitoring:

- Random forests and gradient boosting machines for load pattern recognition

- Neural networks for time-series movement analysis

- Anomaly detection algorithms for identifying deviations from individual baselines

These approaches consistently outperform traditional threshold-based monitoring in identifying meaningful changes in movement quality.

From Raw Data to Actionable Intelligence

The power of ML in performance monitoring lies in synthesis. A single training session generates thousands of data points — positional coordinates, velocity vectors, acceleration profiles, biomechanical joint angles. No human analyst can process this volume in real time.

ML models can:

- Compare today's movement patterns against an athlete's historical baseline

- Detect subtle efficiency changes across multiple biomechanical dimensions simultaneously

- Contextualize current load within the athlete's individual tolerance profile

- Surface insights that would be invisible to manual observation
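As a rough illustration of comparing a session against an individual baseline, the sketch below computes per-feature z-scores from an athlete's own history. The feature names, sample values, and the 2.0 threshold are hypothetical, chosen only for the example; a production system would likely use a richer model than a simple z-score.

```python
from statistics import mean, stdev


def baseline_deviation(history, today):
    """Return per-feature z-scores of today's values against the athlete's own history."""
    scores = {}
    for feature, past_values in history.items():
        # Skip features without enough history or without a value today.
        if len(past_values) < 2 or feature not in today:
            continue
        mu = mean(past_values)
        sigma = stdev(past_values)
        if sigma == 0:
            continue  # A constant history gives no scale to measure deviation against.
        scores[feature] = (today[feature] - mu) / sigma
    return scores


# Hypothetical history for one athlete (illustrative values only).
history = {
    "peak_decel_ms2": [5.0, 5.2, 4.8, 5.1, 4.9],
    "total_distance_m": [9800, 10100, 9900, 10000, 10200],
}
today = {"peak_decel_ms2": 6.0, "total_distance_m": 10000}

z = baseline_deviation(history, today)
# Assumed threshold: flag anything more than 2 standard deviations from baseline.
flagged = [name for name, score in z.items() if abs(score) > 2.0]
print(flagged)
```

Here total distance looks unremarkable while peak deceleration sits well outside the athlete's usual range, which is the kind of change a single aggregate metric can hide.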

The output isn't a diagnosis — it's decision-support intelligence for performance staff.

PlayerGuard's Approach

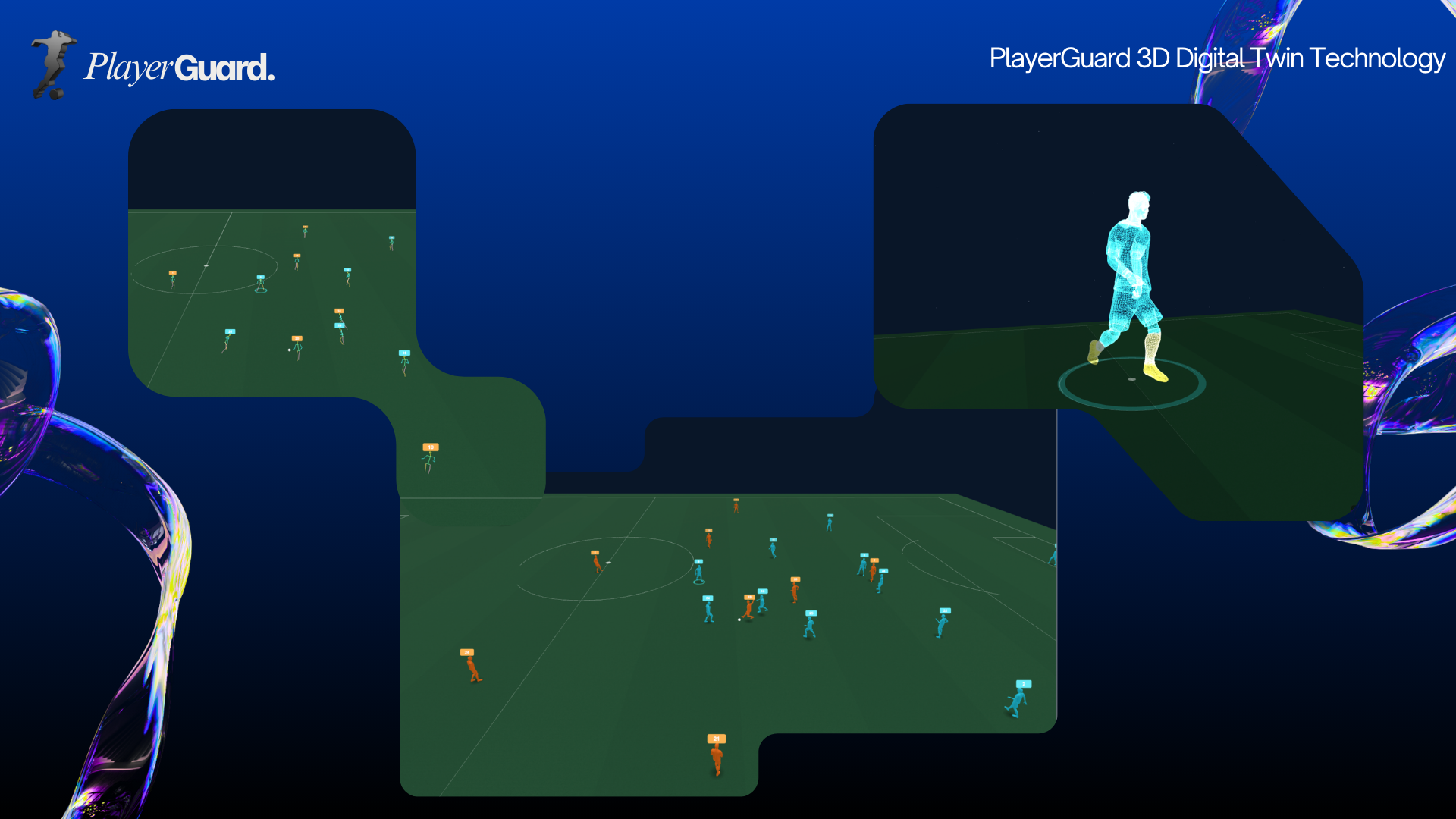

PlayerGuard employs an ensemble machine learning approach combining:

- 3D movement kinematics from skeletal tracking data

- Load metrics from GPS and tracking systems

- Historical performance patterns for individual baseline comparison

Our models are trained to detect deviations in movement efficiency — changes in deceleration patterns, vertical load distribution, or asymmetry indices that indicate elevated mechanical stress.
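To make "asymmetry index" concrete, here is a minimal sketch of one common formulation: the left-right difference expressed as a percentage of the mean of the two sides. The text above does not specify which formula PlayerGuard uses, so this definition, the function name, and the sample force values are assumptions for illustration.

```python
def asymmetry_index(left, right):
    """Percent difference between limbs relative to their mean.

    A positive value means the left side is higher, a negative value the right.
    This is one common definition, assumed here for illustration.
    """
    avg = (left + right) / 2.0
    if avg == 0:
        raise ValueError("mean of left and right must be non-zero")
    return (left - right) / avg * 100.0


# Hypothetical peak vertical forces in newtons for each leg.
index = asymmetry_index(1100.0, 900.0)
print(round(index, 1))  # 20.0
```

Tracked over time against the player's own baseline, a drift in this value is more informative than any single reading.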

Key principles of our approach:

- Individual baselines over population averages: each player's 'normal' is different

- Continuous learning: models update as more data is collected

- Contextual output: Readiness Scores are presented within squad context, not as isolated numbers

- Explainability: every flag includes the biomechanical factors driving it

Data Requirements and Model Integrity

The performance of any ML system depends critically on data quality and quantity. Most successful implementations use GPS/accelerometer data routinely collected in professional settings. Adding 3D biomechanical features (from motion capture systems) significantly enhances analytical depth.

Validation matters. Many published models use cross-validation within a single dataset, which can overestimate real-world performance. PlayerGuard employs rolling temporal validation — models are continuously tested against future data they were not trained on. This approach ensures our outputs reflect genuine predictive value, not statistical artifacts.
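The idea behind rolling temporal validation can be sketched as a split generator in which every test window lies strictly after its training window. The expanding-window scheme, the function name, and the parameters below are assumptions for illustration; the text above does not describe PlayerGuard's exact procedure.

```python
def rolling_origin_splits(n_samples, initial_train, horizon, step=None):
    """Yield (train_indices, test_indices) pairs for time-ordered samples.

    The training window grows over time, and each test window contains only
    samples that come after everything in the training window.
    """
    step = step or horizon
    train_end = initial_train
    while train_end + horizon <= n_samples:
        train_idx = list(range(0, train_end))
        test_idx = list(range(train_end, train_end + horizon))
        yield train_idx, test_idx
        train_end += step


# Ten sessions in chronological order: train on the first 4, test on the next 2, then roll forward.
splits = list(rolling_origin_splits(n_samples=10, initial_train=4, horizon=2))
for train_idx, test_idx in splits:
    print(f"train 0..{train_idx[-1]}  test {test_idx}")
```

Unlike shuffled cross-validation, no split here lets the model see sessions from after the period it is being tested on, which is what guards against optimistic estimates.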

Implementation Considerations

Even sophisticated analysis tools face adoption challenges. Outputs must integrate into existing workflows without adding friction for performance staff.

Effective implementation requires:

- Probability-based outputs rather than binary classifications, enabling staff to weigh information against other factors

- Contextual presentation: showing where a player sits relative to their own history and squad averages

- Actionable framing: not just 'elevated load' but 'elevated load driven by increased deceleration stress over the past 3 sessions'

- Natural language interface: allowing staff to query the system directly rather than navigating complex dashboards
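A small sketch of how a probability and its contributing factors might be turned into the kind of actionable message described above. The function name, the driver weights, and the message template are hypothetical and are not PlayerGuard's actual output format.

```python
def describe_flag(player, probability, drivers, window_sessions):
    """Build a short staff-facing message from a probability and its top drivers."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if not drivers:
        raise ValueError("at least one driver is required")
    # Rank drivers by the size of their contribution and keep the top two.
    top = sorted(drivers.items(), key=lambda item: abs(item[1]), reverse=True)[:2]
    reasons = " and ".join(name for name, _ in top)
    return (
        f"{player}: {probability:.0%} probability of elevated load, "
        f"driven by {reasons} over the past {window_sessions} sessions"
    )


# Hypothetical contribution weights for one flagged player.
message = describe_flag(
    "Player A",
    0.72,
    {
        "increased deceleration stress": 0.41,
        "vertical load asymmetry": 0.18,
        "total distance": 0.05,
    },
    window_sessions=3,
)
print(message)
```

Reporting a probability alongside its main drivers leaves the final call with performance staff, who can weigh it against context the model does not see.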

PlayerGuard is designed around these principles, presenting Readiness Scores as decision-support tools rather than prescriptive directives.

Conclusion

Machine learning represents a significant evolution in how performance teams process and act on athlete data. The ability to synthesize high-dimensional information in real time, and to surface patterns invisible to manual analysis, offers a genuine competitive advantage. However, ML is a tool, not an oracle. The most effective implementations combine algorithmic intelligence with expert human judgment. PlayerGuard is built to augment performance staff decision-making, not replace it. Organizations investing in robust data infrastructure and ML capabilities today will be best positioned as these technologies continue to mature.
